TikTok allegedly remixed Finji’s ads with racist, sexualized AI art

Game intel

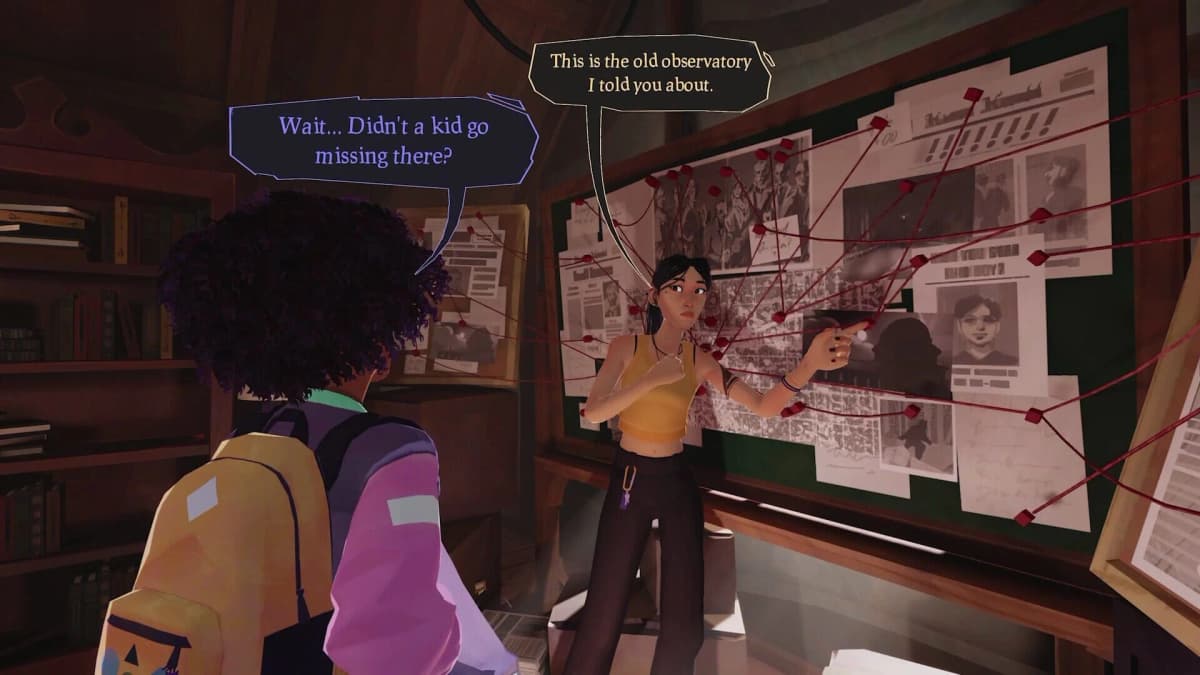

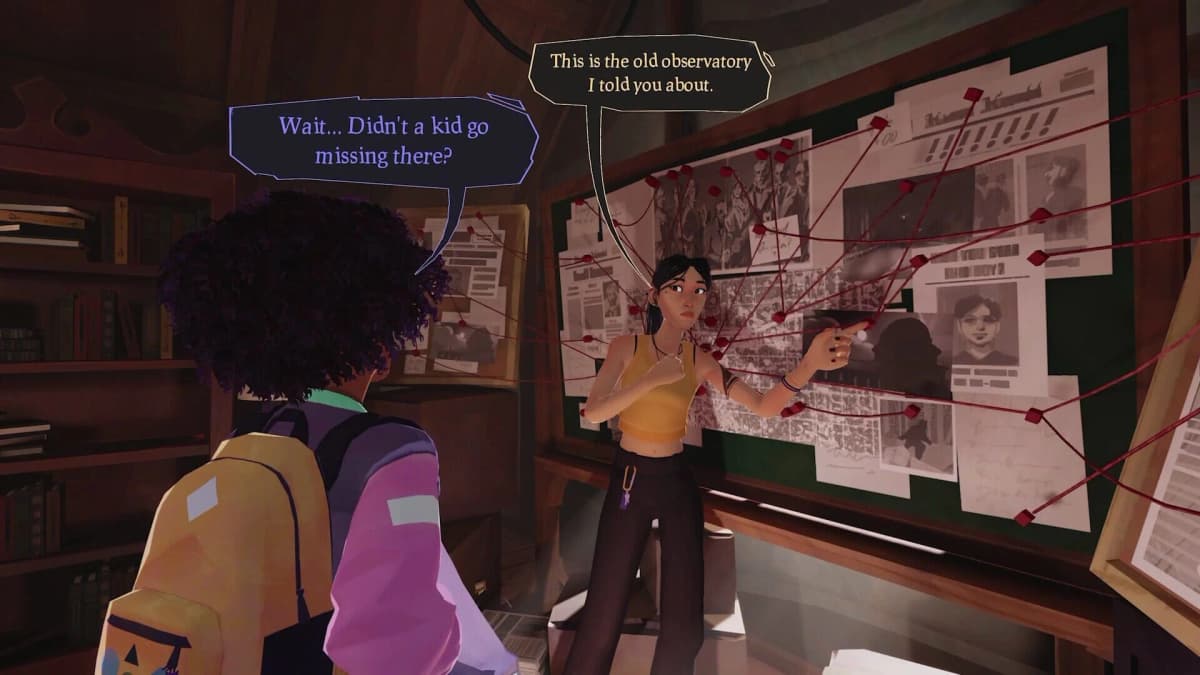

Why this matters: an ad platform allegedly used generative AI to alter an indie game’s art, and the developer had no control.

Finji, the indie studio behind Night in the Woods, Tunic and the upcoming Usual June, says it discovered in early February that TikTok was serving AI-altered versions of its paid ads – including a version that sexualized and racialized Usual June’s Black protagonist – without the publisher’s consent. The kicker: Finji claims it had TikTok’s AI ad tools turned off, can’t view or edit the altered variants, and has been stuck in an escalating but unresolved support ticket with the platform.

- Finji says TikTok’s Smart Creative / Automate Creative and other automated programs altered its ad assets without permission.

- The altered Usual June image reportedly exaggerates and sexualizes the Black protagonist, invoking harmful stereotypes; Finji learned about it via player screenshots and comments.

- TikTok has acknowledged the incident internally but has not removed the ads or given Finji a clear fix; an opt-out blocklist was offered with no guarantee.

What happened — the mechanics and the mess

Multiple gaming outlets report the same sequence of events: Finji runs TikTok ads but had “Smart Creative” and similar generative features switched off. Fans began flagging strange slideshow-style variants of official video creatives, and screenshots showed one modified image that extends the original Usual June cover art downward to depict the protagonist in a bikini bottom, with oversized hips and thigh-high boots. Finji says that depiction never appears in the game and reads as a racialized, sexualized stereotype.

TikTok’s Smart Creative and Automate Creative tools are designed to remix assets and automatically optimize ad performance, but Finji insists it did not opt in. After Finji provided screenshots, TikTok initially told the studio it saw no sign of AI-modified assets, then acknowledged the evidence and said it was escalating the issue. Subsequent replies described the ads as part of a “catalog ads” initiative that mixes carousel and video assets and touted a potential 1.4x lift in return on ad spend (ROAS), while offering an “opt-out blocklist” with no guarantee that it would work.

Where platforms and publishers collide

This is the kind of collision the industry warned about when ad platforms started turning to generative models: algorithmic remixing can produce content that’s offensive, inaccurate, or simply not the brand the buyer paid for. For an indie label like Finji — where tone, representation and small-audience trust are everything — the reputational cost is real. Rebekah Saltsman, Finji’s CEO, called TikTok’s responses “shockingly inadequate,” and said the studio expected an apology, systemic changes, and a human with authority to resolve the damage. So far, those haven’t materialized.

From the platform side, the defense so far amounts to this: we didn’t intend it, but your campaign may have been swept into a broader automated experiment that mixes assets to boost performance. That’s a weak answer for creators who paid for specific messaging and imagery, and for communities harmed by racist or sexualized depictions of characters who were never presented that way in the original work.

What this means for developers and advertisers

If you’re a developer or marketer, this should set off three alarms. First: audit your ad dashboards and community channels — creators aren’t always the first to see automated variants. Second: demand transparent controls and logs from platforms showing what automated programs touched an asset. Third: treat “opt-out” as insufficient unless it’s immediate, verifiable and reversible.

According to reports, TikTok has been unwilling to guarantee removal or to connect Finji with a senior decision-maker. That is a worst-case scenario: a paying customer exposed to reputational harm with no clear remediation. Indie studios don’t have the legal war chests or PR teams that let a major publisher absorb this sort of damage.

What’s next — and what to watch for

Finji has publicly stopped running ads on TikTok while the matter remains unresolved, and the story has drawn coverage from GamesIndustry.biz, IGN, Game Developer and TechRaptor. Expect regulators, other creators and ad buyers to pay attention: this sits at the intersection of AI misuse, content moderation failures and platform business practices.

Two changes would help prevent future incidents: platforms providing full visibility into automated asset changes (who or what edited what, and when), and a reliable, platform-level mechanism to roll back or remove offending automated variants immediately. Until then, indie studios should be cautious about where they spend ad dollars.

TL;DR

TikTok reportedly used generative systems to remix Finji’s ad creatives into racist and sexualized imagery for Usual June, served those versions to users without the publisher’s consent, and has not provided a satisfactory fix. This isn’t just a technical glitch — it’s a content-moderation and platform-control problem that disproportionately hurts smaller developers who rely on careful representation.
