Game intel

Usual June

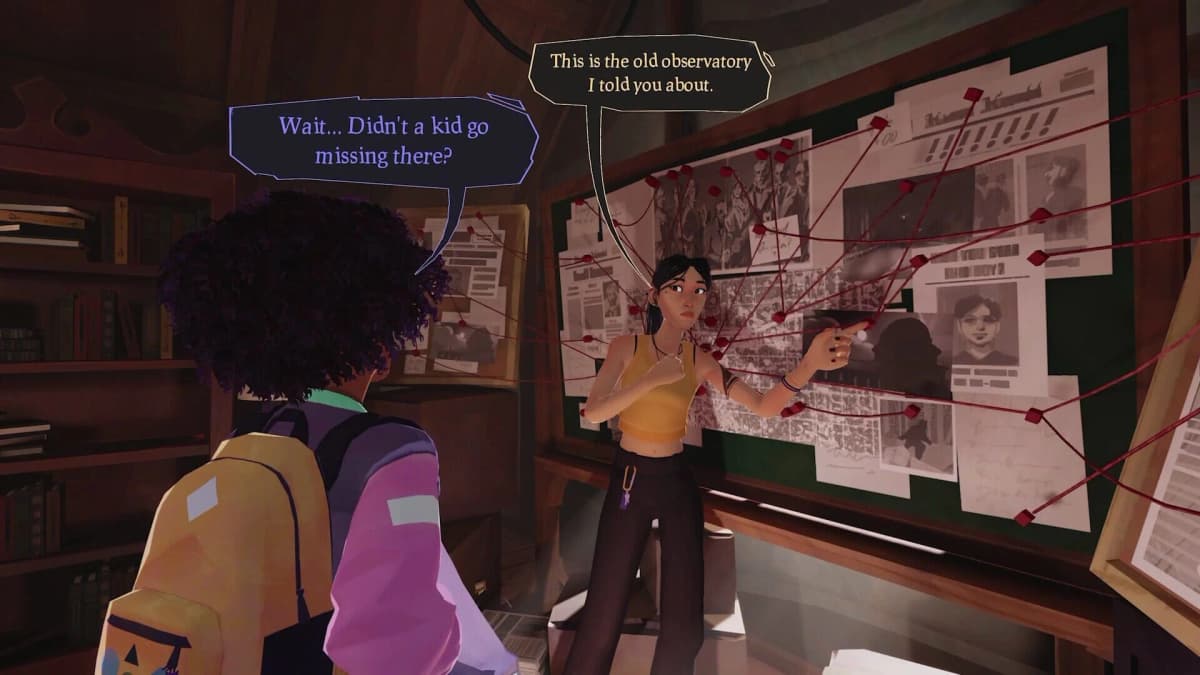

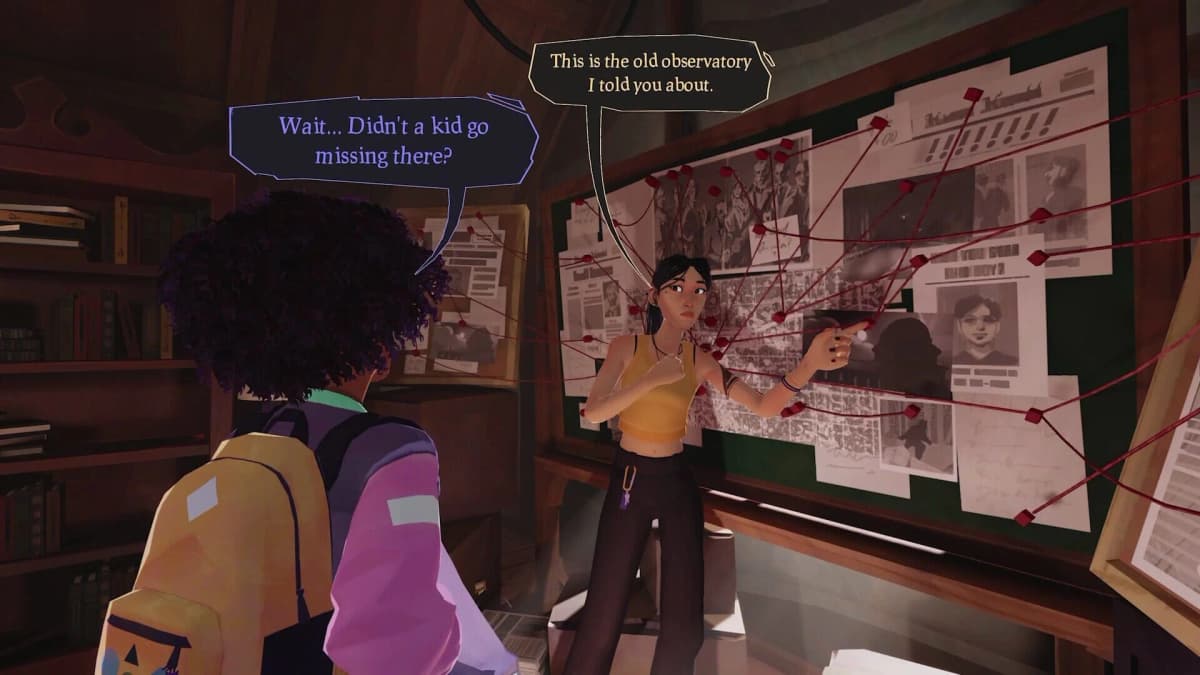

Hang out, fight monsters, and discover your town’s darkest secrets in Usual June, a third-person action game about summer break and the end of the world.

This caught my attention because it’s an ugly intersection of two trends: platforms using generative AI to “optimise” ads, and increasingly automated moderation and publisher tools that give creators almost no visibility. Indie studio and publisher Finji says TikTok used those exact tools to produce racist, sexualised versions of promotional art for Usual June – variants Finji didn’t create, couldn’t view or edit, and that TikTok declined to remove. That’s more than a PR fail. It’s a structural problem for representation, consent and basic advertiser control.

Finji — the indie house behind Overland and publisher of Night in the Woods and Tunic — learned in early February that TikTok was serving altered ads that showed Usual June’s Black protagonist in a sexualised, stereotyped way. Fans flagged the creatives; Finji’s CEO Rebekah Saltsman posted about it publicly and shared screenshots with press (IGN, GamesIndustry.biz, Steam news, TechRaptor). The studio says it did not opt into TikTok’s generative ad features (Smart Creative/Automate Creative) and had them switched off, but the platform nonetheless produced AI variants and pushed them to users while presenting them as coming from Finji’s account.

Across reports, TikTok’s support interactions read like a Kafkaesque loop: agents initially promised escalation, later said there was no indication generative AI was used, then backtracked and agreed to an internal escalation (GamesIndustry.biz, IGN, TechRaptor). The final message Finji received, according to its account, suggested the ads were part of “a broader automated initiative” and that moderation teams had issued “final findings and actions.” What those actions were is unclear. Finji says it could not view or take down the offending ad variants itself, and the lack of a transparent remediation led it to halt advertising on the platform.

There are several levels of harm here. First, the creative itself reportedly leaned on racist and sexualised tropes about a Black woman — a direct representational injury for a character and its audience. Second, an advertiser being unable to see or control content credited to their account is egregious: it breaks basic expectations of consent and brand safety. Third, the inconsistent support response highlights how opaque automated ad ecosystems are — especially when AI is remixing and optimising content without clear opt-out and audit trails.

This isn’t an isolated scare about “AI gone wrong.” Platforms are racing to automate ad creative because it boosts engagement and ad revenue. TikTok’s Smart Creative and Automate Creative exist to test hundreds of variants quickly. But Finji’s case shows what happens when optimisation outpaces guardrails: algorithms can amplify offensive stereotypes, and companies — especially smaller studios without enterprise legal teams — are left with little recourse.

For game developers and marketers, the practical takeaway is immediate: audit your ad placements, demand transparency on whether platforms use generative tools on your assets, and insist on the ability to review, approve or remove AI-generated variants. For platforms, this should be a wake-up call to implement clearer consent systems, better human oversight, and faster, accountable remediation paths when creative misuse is reported.

As a fan of indie games and someone who watches representation in media, I find the worst part to be the erasure of authorial intent: art and characters are altered in ways that can harm real communities, while the creators are effectively silenced. Finji’s sensible decision to pause ads signals that the platform has lost its trust. That matters; TikTok is a big discovery channel for indies. Losing that tap because of opaque AI experiments is both a business hit and a cultural one.

Finji says TikTok generated racist, sexualised AI variants of Usual June ads without permission, the studio couldn’t view or remove the ads, and TikTok’s support gave mixed answers before offering no clear fix. Finji has stopped running ads on TikTok. This episode exposes the real-world costs of automated ad optimisation and the urgent need for transparency, opt-out control and better moderation when AI edits creative assets.
